
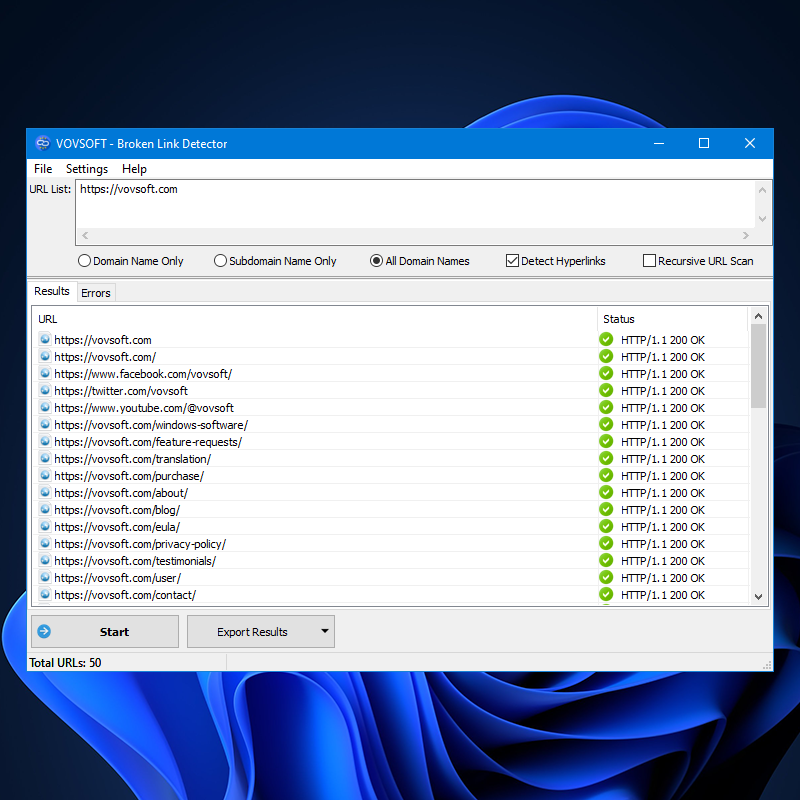

There are two methods to extract links from a website: by domain or by a specific page URL. Select the one that suits your needs, input the domain or URL, and use the “Get all links” button. Once ready, the tool begins scraping the site data. Choosing the domain option is beneficial if you want to extract all links from a website and identify any existing link issues. The URL option, however, is more suitable if you primarily need data for a specific page, including all its references and associated data, such as status codes.

Sitemap files generally contain a collection of URLs on a website along with some metadata for these URLs. Notably, an approach that returns a list of files, not URLs, would only really work for sites that are collections of static HTML files. It falls short if the site has URL query parameters or anything generated server-side.
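
Since sitemap files list URLs together with metadata, reading one is a simple way to collect a site's URLs. The following is a minimal sketch, assuming a sitemap that follows the sitemaps.org 0.9 schema. The `parse_sitemap` helper, the sample XML, and the example.com URLs are hypothetical and are not part of the tool described here.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org 0.9 schema.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content; example.com is a placeholder domain.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url>
    <loc>https://example.com/</loc>
    <lastmod>2024-01-15</lastmod>
  </url>
  <url>
    <loc>https://example.com/about</loc>
  </url>
</urlset>
"""


def parse_sitemap(xml_text):
    """Return one dict per <url> entry with its location and optional lastmod."""
    root = ET.fromstring(xml_text)
    entries = []
    for url in root.findall("sm:url", SITEMAP_NS):
        loc = url.findtext("sm:loc", default="", namespaces=SITEMAP_NS).strip()
        lastmod = url.findtext("sm:lastmod", default=None, namespaces=SITEMAP_NS)
        entries.append({"loc": loc, "lastmod": lastmod})
    return entries


if __name__ == "__main__":
    for entry in parse_sitemap(SAMPLE_SITEMAP):
        print(entry["loc"], entry["lastmod"])
```

A real sitemap would be downloaded first (often from `/sitemap.xml`, though the location varies by site) and may be a sitemap index that points to further files, which this sketch does not handle.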

The URL unpacker is highly beneficial, as it enables you to analyze URLs in bulk rather than checking them individually. It provides a wealth of data, ranging from status codes to anchor text and nofollow statuses. The website all URL finder feature can find all links on a website and detect various link issues, while explaining how to resolve them. It’s also handy for testing newly published landing pages for broken or incorrect references. Use this multifaceted tool for various tasks: for instance, evaluating the quantity of external and internal links on a webpage, verifying the status of links, or generating sitemaps.

I didn't mean to answer my own question, but I just thought about running a sitemap generator. The first one I found has a nice text output.

Python is a beautiful language to code in. It is used for a number of things, from data analysis to server programming. It has a great package ecosystem, there's much less noise than you'll find in other languages, and it is super easy to use.

As it seemed strange to me that the root site collection would always be the first element in the SPWebApplication.Sites collection, I decided to do a quick test.

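
To make the internal-versus-external link count mentioned above concrete, here is a minimal sketch using only the Python standard library. It parses an HTML string, so it makes no network requests and does not check status codes. The `LinkCollector` class, the `classify_links` function, and the sample markup are hypothetical names chosen for illustration, and "internal" is assumed to mean "same host as the page URL".

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect <a href> links with their anchor text and nofollow status."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []
        self._current = None  # link currently being read, if any

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if not href:
            return
        rel = (attrs.get("rel") or "").lower().split()
        self._current = {
            "url": urljoin(self.base_url, href),  # resolve relative links
            "anchor": "",
            "nofollow": "nofollow" in rel,
        }

    def handle_data(self, data):
        if self._current is not None:
            self._current["anchor"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            self._current["anchor"] = self._current["anchor"].strip()
            self.links.append(self._current)
            self._current = None


def classify_links(html, base_url):
    """Split http(s) links into (internal, external) by comparing hosts."""
    parser = LinkCollector(base_url)
    parser.feed(html)
    parser.close()
    base_host = urlparse(base_url).netloc
    internal, external = [], []
    for link in parser.links:
        parts = urlparse(link["url"])
        if parts.scheme not in ("http", "https"):
            continue  # skip mailto:, javascript:, and similar schemes
        (internal if parts.netloc == base_host else external).append(link)
    return internal, external


# Hypothetical page markup; the domains are placeholders.
SAMPLE_HTML = """
<a href="/pricing">Pricing</a>
<a href="https://example.com/blog">Blog</a>
<a href="https://other.example.org/" rel="nofollow">Partner</a>
<a href="mailto:hello@example.com">Email us</a>
"""

if __name__ == "__main__":
    internal, external = classify_links(SAMPLE_HTML, "https://example.com/landing")
    print(len(internal), "internal,", len(external), "external")
```

Comparing hosts exactly treats subdomains such as `www.example.com` and `example.com` as different sites; a real checker would need a rule for that.
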
Our holistic SEO platform offers more than just link analysis. It includes a thorough SEO audit (both on-page and off-page), rank tracking, and site monitoring, along with other additional features.

Cases When Page or Domain URL Extractor is Needed

The link extractor tool serves to grab all links from a website or extract links on a specific webpage, including internal links, internal backlinks, internal backlink anchors, and external outgoing links for every URL on the site.

- Collect comprehensive data for every URL on your website, including internal links, internal backlinks, internal backlink anchors, and external outbound links.
- Detect all link-related issues, curate an exhaustive list of troublesome URLs, and provide straightforward solutions for these problems.
- Provide continuous tracking and monitoring capabilities, ensuring 24/7 attention, including backlink tracking, and preserving a complete log of changes and errors.

useSearchParams does not take any parameters. It returns a read-only version of the URLSearchParams interface, which includes utility methods for reading the URL's query string:

- URLSearchParams.get(): Returns the first value associated with the search parameter.
- URLSearchParams.has(): Returns a boolean value indicating if the given parameter exists.
- Learn more about other read-only methods of URLSearchParams, including getAll(), keys(), values(), entries(), forEach(), and toString().

useSearchParams is a Client Component hook and is not supported in Server Components to prevent stale values during partial rendering.

If an application includes the /pages directory, useSearchParams will return ReadonlyURLSearchParams | null. The null value is for compatibility during migration since search params cannot be known during pre-rendering of a page that doesn't use getServerSideProps.

If a route is statically rendered, calling useSearchParams() will cause the tree up to the closest Suspense boundary to be client-side rendered. This allows a part of the page to be statically rendered while the dynamic part that uses searchParams is client-side rendered. You can reduce the portion of the route that is client-side rendered by wrapping the component that uses useSearchParams in a Suspense boundary.
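
The read operations described above (first value, existence check, all values) can be tried outside the browser. The sketch below is a rough Python analogue built on `urllib.parse`. It is not the Next.js or URLSearchParams API, and the `read_params` helper and the example URL are hypothetical.

```python
from urllib.parse import parse_qs, urlsplit


def read_params(url):
    """Parse a URL's query string into a dict mapping each name to a list of values."""
    return parse_qs(urlsplit(url).query, keep_blank_values=True)


# Hypothetical URL with a repeated parameter.
params = read_params("https://example.com/search?tag=seo&tag=links&page=2")

first_tag = params.get("tag", [None])[0]  # comparable to URLSearchParams.get("tag")
has_page = "page" in params               # comparable to URLSearchParams.has("page")
all_tags = params.get("tag", [])          # comparable to URLSearchParams.getAll("tag")

if __name__ == "__main__":
    print(first_tag, has_page, all_tags)
```

As with the read-only object returned by useSearchParams, nothing here modifies the URL; the dict is a parsed copy of the query string.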
